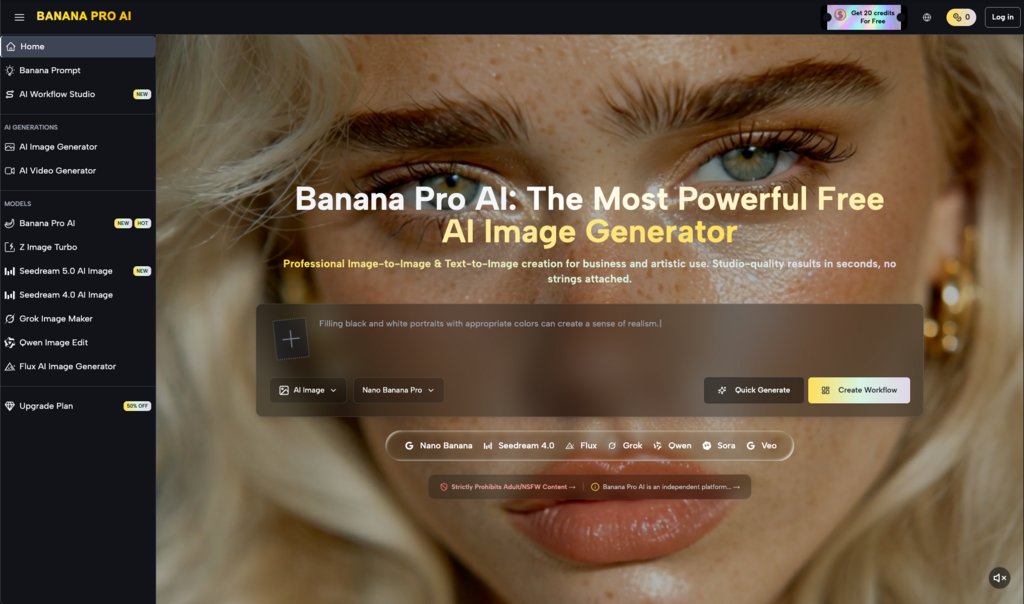

In the fast-paced world of digital media, creators often find that traditional software cannot keep up with the demand for instant, high-quality content. The delay between inspiration and execution can mean missed market trends and diminished brand impact. To address these inefficiencies, the AI Photo Editor known as Nano Banana 2 was introduced in early 2026, a notable milestone for the Gemini image generation ecosystem. The system combines text-to-image generation, precise image-to-image editing, and the rendering of clear, readable text within visuals.

The struggle to maintain a consistent visual voice across multiple platforms is a common pain point for modern marketing teams. Without a centralized system for style control, campaigns often feel fragmented and unprofessional. Nano Banana 2 solves this by bridging the gap between raw algorithmic power and the precise needs of a professional designer. In my testing, the transition from broad generative concepts to specific, controlled adjustments is what sets this system apart. It allows users to move beyond the unpredictability of early AI tools into a more structured environment where the human designer remains the primary architect of the visual narrative.

Moving Beyond Random Generation Toward Precise Contextual Image Editing

The primary limitation of traditional generative models has been their focus on creating images from a blank canvas. While this is useful for brainstorming, it often fails in professional scenarios where an existing asset needs a specific update. For example, if a photographer needs to change the lighting of a scene or swap a background while keeping the subject identical, a standard generator would likely force a complete and unwanted redesign. Nano Banana 2 changes this dynamic by offering a dual-path architecture that prioritizes both the creation of new imagery and the sophisticated transformation of existing files.

In my observation, the image-to-image capability is where the platform truly shines for professional workflows. It treats the uploaded photo as a structural guide rather than just a loose suggestion. This means that details such as human anatomy, perspective, and core composition are preserved while the AI applies new styles, colors, or textures. This level of intentionality is essential for e-commerce and editorial work, where the integrity of the original subject must be maintained even as the surrounding environment is reimagined to fit a new campaign theme.

Overcoming Common Limitations of Legible Text in Generative Environments

A recurring frustration in the industry has been the “garbled text” problem found in most AI-generated graphics. For years, designers have had to manually overlay text using secondary editing tools because generative models could not produce coherent typography. This disconnect often results in a visual clash between the rendered image and the superimposed font. The platform addresses this by treating text as a native component of the generation process, so that letters and symbols interact naturally with the shadows, reflections, and textures of the background.

The system’s ability to render crisp, aligned typography represents a significant technical leap for the industry. I have observed that this feature is particularly stable when generating posters, invitations, and branded content where readability is non-negotiable. By allowing typography to be baked into the image, the final output feels like a single, cohesive piece of design rather than a collection of disparate elements. This capability drastically reduces the time required to produce production-ready marketing assets.

Enhancing Commercial Graphics with Native High Definition Typography Rendering

When we look at the requirements for a high-impact logo or promotional banner, the clarity of the message is paramount. The native text rendering within the platform ensures that brand names and headlines are sharp even at higher resolutions. In my tests, the system handled various font styles and placements with a level of accuracy that was previously reserved for manual graphic design work. This makes the platform an invaluable asset for small business owners and independent creators who need professional results without the overhead of a full design agency.

Implementing the Official Strategic Workflow for Professional Content Creation

Successfully integrating AI into a production cycle requires more than just access to the technology; it requires a logical operational framework. The interface of Nano Banana 2 is designed to minimize the complexity of the creative process, guiding the user through a series of structured decisions. This predictable workflow is essential for agencies that need to manage multiple projects under tight deadlines. Based on the official guidelines, the process can be broken down into four distinct stages that ensure the final product meets professional standards.

It is important to remember that while the system is highly automated, the quality of the final output is a direct reflection of the clarity of the user’s initial instructions. By following the official protocol, you can ensure that the AI understands your creative intent and produces a result that requires minimal post-production. The following steps represent the core journey from an initial concept to a finished digital asset.

Step 1: Choose Image to Image or Text to Image Mode

The first step is to define the starting point of your project. Select Text to Image if you are generating a visual from a written description. Choose Image to Image if you intend to use the AI Photo Editor to modify, restyle, or refine an existing photograph or graphic.

Step 2: Describe Your Idea with Detailed Prompts

Input your vision into the prompt box. For the best results, be specific about the subject, environmental lighting, and any text that needs to be included. The more descriptive the prompt, the more accurately the system can interpret your specific artistic requirements.

Step 3: Click Generate and Review the Output

Click Generate to start the process. Within a few seconds, the platform will produce a high-fidelity image based on your parameters. In my experience, the speed of this stage allows for a highly iterative process where you can quickly test different creative directions.

Step 4: Download and Export the Professional Asset

Review the final generation for quality and accuracy. Once satisfied, you can download the high-resolution file in the desired aspect ratio. The image is now ready for use in social media, marketing campaigns, or web layouts.
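The first two steps above can be sketched in code as a way to keep prompts consistent across a team. This is only an illustrative sketch: the article describes a web interface, not an API, so `Mode`, `GenerationRequest`, `build_prompt`, and `make_request` are hypothetical names invented here, and nothing in this block contacts the actual service. It simply shows how the mode choice and a detailed prompt (subject, lighting, and any text to render) might be assembled before being pasted into the prompt box.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    # Hypothetical labels for the two modes described in Step 1.
    TEXT_TO_IMAGE = "text-to-image"
    IMAGE_TO_IMAGE = "image-to-image"


@dataclass(frozen=True)
class GenerationRequest:
    # Hypothetical container; not part of any official API.
    mode: Mode
    prompt: str
    aspect_ratio: str
    source_image: Optional[str] = None


def build_prompt(subject: str, lighting: str, overlay_text: Optional[str] = None) -> str:
    """Combine the details Step 2 recommends into one descriptive prompt."""
    if not subject.strip():
        raise ValueError("subject must not be empty")
    parts = [subject.strip(), f"lighting: {lighting.strip()}"]
    if overlay_text:
        # Quote the text so the exact wording to render is unambiguous.
        parts.append(f'include the exact text "{overlay_text}"')
    return ", ".join(parts)


def make_request(
    subject: str,
    lighting: str,
    overlay_text: Optional[str] = None,
    source_image: Optional[str] = None,
    aspect_ratio: str = "1:1",
) -> GenerationRequest:
    """Pick the mode (Step 1) from whether a source image is supplied."""
    mode = Mode.IMAGE_TO_IMAGE if source_image else Mode.TEXT_TO_IMAGE
    prompt = build_prompt(subject, lighting, overlay_text)
    return GenerationRequest(mode, prompt, aspect_ratio, source_image)


if __name__ == "__main__":
    request = make_request(
        "ceramic mug on a wooden table",
        "soft morning light",
        overlay_text="OPEN DAILY",
    )
    print(request.mode.value)
    print(request.prompt)
```

Keeping the prompt fields separate makes it easier to change one detail, such as the lighting, between iterations while leaving the rest of the description untouched.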

Utilizing Reference Based Style Transfer to Maintain Unified Brand Identities

For established brands, the aesthetic consistency of their content is their most valuable asset. If every image in a campaign looks slightly different, the brand identity becomes diluted. The style transfer feature within the platform allows users to upload a reference image that serves as a visual guide for the AI. This helps every subsequent generation follow the same color palette, lighting, texture, and overall mood as the original brand asset.

| Workflow Comparison | Standard AI Tools | Nano Banana 2 |
| --- | --- | --- |
| Text Reliability | Often unreadable symbols | Clear and native typography |
| Edit Control | Limited to full redraws | Targeted image-to-image editing |
| Style Consistency | High variance between results | Precise reference style transfer |
| Size Versatility | Fixed aspect ratios | Diverse multi-size adaptation |
| Speed of Iteration | Slow due to random results | Fast via controlled modification |

Synchronizing Global Visual Assets Across Diverse Marketing Channels

The ability to maintain a unified visual voice is particularly useful for global campaigns where different teams may be producing content simultaneously. By using a shared reference image, a team in one region can keep its visuals closely aligned with those created in another. I have found that this feature is most effective when used with clear, high-contrast reference photos that define a brand’s unique lighting and aesthetic signature. It provides a level of certainty that is often missing from purely prompt-based tools.

Evaluating System Limitations and the Evolving Role of the Digital Designer

As we move deeper into the era of neural image engineering, it is crucial to maintain a grounded understanding of the technology’s current boundaries. While Nano Banana 2 represents a major leap forward, it is not a complete replacement for human judgment. The output remains deeply dependent on the quality and specificity of the user’s prompts. In some complex scenarios, you may find that achieving the exact intended result requires two or three iterations of the prompt or slight adjustments to the configuration settings.

In my testing, I have observed that while the system excels at style transfer and text rendering, extremely niche or abstract concepts may still require more detailed guidance than standard requests. Furthermore, the AI acts as a powerful collaborator, but the “creative eye” (the ability to recognize what makes a composition successful) remains a uniquely human skill. Understanding these limitations is the key to using the platform effectively; it is a tool meant to amplify productivity and creativity, not to automate them into obsolescence.

The future of digital content creation lies in the seamless collaboration between human intuition and machine efficiency. By embracing tools that offer greater control and precision, designers can reclaim the time they once spent on technical drudgery and refocus it on the strategic storytelling that truly drives brand value. Nano Banana 2 is a significant step toward this future, providing the features and flexibility needed to thrive in an increasingly visual economy.