Building a recognizable brand or a compelling narrative depends heavily on visual consistency, yet this is precisely where many digital creators struggle when experimenting with new technologies. When a character’s face or features shift between scenes, the audience’s immersion is broken, and trust in the content begins to erode. This “identity drift” is a common byproduct of early-stage generative AI, making it difficult to use these tools for professional storytelling or long-term marketing campaigns. Seedance 2.0 directly addresses this critical pain point by prioritizing character preservation through specialized neural modules. By ensuring that the digital persona remains stable across diverse animation cycles, the platform enables creators to build cohesive visual worlds without the constant fear of technical inconsistency.

The evolution of generative video has moved beyond the “novelty” phase and is now entering a stage where reliability is the primary metric of success. In my observation, the ability to take a single character design and place it into multiple high-energy or subtle scenarios while keeping the face unmistakably the same is a game-changer for the industry. This level of control allows for the creation of serialized content, such as web series or recurring social media ads, where the character becomes a familiar face to the audience. While the process still requires a thoughtful approach to prompting, the underlying framework provides a much more stable foundation for brand-building than general-purpose video generators that lack identity-locking features.

Solving The Identity Drift Problem In Automated Animation Cycles

The core challenge of identity preservation in AI is ensuring that the model remembers the “anchor” features of the subject while it calculates the complex physics of motion. In simpler models, the focus on movement often comes at the expense of detail, leading to the subject looking like a slightly different person in every frame. The platform overcomes this by using a dual-pathway processing system: one path focuses on the fluidity of the motion, while the other maintains a constant reference to the original character’s features.

Utilizing Multimodal Alignment For Precise Facial Feature Retention

This precision is made possible through multimodal alignment, where the system cross-references the textual instructions with the visual data of the source image. When the AI is told to make a character dance or speak, it doesn’t just animate a generic human form; it maps those movements onto the specific bone structure and facial landmarks of the uploaded subject. In my testing, this results in a much more convincing performance, as the expressions feel authentic to that specific character. This is particularly noticeable in the “Talking Avatar” feature, where the lip-syncing and eye movements are synchronized to the character’s unique facial geometry.

Observing Expression Accuracy During High Dynamic Movement Scenarios

Dynamic movements, such as jumping or dancing, provide the ultimate test for character consistency. During these sequences, the subject’s face is often seen from different angles and under varying lighting conditions. In my observation, the system does an admirable job of maintaining the character’s “essence” even during fast-paced segments. While there can occasionally be a slight softening of details during the most rapid motions, the overall identity remains remarkably intact. This level of stability is essential for creators who want to use AI to produce music videos or high-energy social media content without sacrificing the integrity of their digital persona.

Technical Strategies For Reducing Character Morphing In Long Clips

Longer video clips naturally provide more opportunities for the AI to lose track of the subject’s identity. To counter this, the platform utilizes a temporal consistency check that looks at both preceding and succeeding frames to ensure a smooth transition. This prevents the “morphing” effect where a character’s hair or clothing might slowly change color or texture over the course of a ten-second clip. By enforcing these strict visual boundaries, the system ensures that the final output feels like a single, continuous recording rather than a series of loosely related images stitched together.
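A check of this kind can be pictured with the short sketch below. It is not Seedance 2.0's implementation, which is not documented here; it assumes each frame has already been reduced to a feature vector by some hypothetical embedding step, and the function name and thresholds are illustrative. A frame is flagged as a sudden jump when it disagrees with both its preceding and succeeding frames, and as gradual morphing when it has wandered too far from the first frame.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def find_drift(embeddings, neighbour_threshold=0.9, anchor_threshold=0.8):
    """Return (frame_index, reason) pairs for frames that look inconsistent.

    embeddings: per-frame feature vectors (hypothetical input, assumed to come
    from an external face or appearance encoder). Thresholds are illustrative.
    """
    if not embeddings:
        return []
    anchor = embeddings[0]  # the first frame serves as the identity anchor
    report = []
    for i, current in enumerate(embeddings):
        # Collect the preceding and succeeding frames where they exist.
        neighbours = [embeddings[j] for j in (i - 1, i + 1) if 0 <= j < len(embeddings)]
        # Sudden jump: the frame disagrees with every neighbour it has.
        sudden = bool(neighbours) and all(
            cosine_similarity(current, n) < neighbour_threshold for n in neighbours
        )
        # Gradual morphing: neighbours agree, but the frame has left the anchor.
        gradual = cosine_similarity(current, anchor) < anchor_threshold
        if sudden or gradual:
            report.append((i, "sudden" if sudden else "gradual"))
    return report
```

Comparing only adjacent frames would miss a slow change in hair or clothing, because each step is small; the anchor comparison is what catches that case.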

Crafting Narrative Cohesion Through Consistent Digital Persona Animation

Consistency is the bedrock of storytelling. For a narrative to be effective, the audience must believe in the characters and their journey. Using AI to generate these characters offers incredible creative freedom, but only if those characters can be relied upon to look the same in every scene. The platform’s focus on identity allows creators to move from producing “one-off” viral clips to developing full narratives. This opens the door for independent filmmakers and brand storytellers to create professional-quality animated content with a fraction of the traditional animation budget.

Integrating Character Consistency Into Multi Shot Storytelling Workflows

A typical narrative sequence involves multiple shots from different perspectives: a close-up for emotion, a wide shot for context, and a medium shot for action. The challenge for AI is ensuring the character looks identical across all these different shots. In my observation, the platform’s ability to “remember” character traits across different generations is its most powerful asset for storytellers. By using the same reference image as a baseline for every shot, creators can build a library of clips that fit together perfectly in the editing room, creating a seamless viewing experience for the audience.
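The idea of one shared baseline can be kept honest with a small planning structure. The sketch below is a hypothetical helper, not part of any Seedance 2.0 API: the `Shot` class, the `plan_requests` function, and the dictionary keys are all assumptions made for illustration. It only shows how every shot in a sequence can be tied to the same reference image and the same character description before anything is generated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Shot:
    """One planned shot in a sequence (hypothetical structure)."""
    name: str
    framing: str  # e.g. "close-up", "wide shot", "medium shot"
    action: str


def plan_requests(reference_image, character_description, shots):
    """Build one generation request per shot, all sharing a single reference image.

    The returned dictionaries are plain planning data; how they are submitted
    to a video generator is outside the scope of this sketch.
    """
    requests = []
    for shot in shots:
        prompt = f"{shot.framing} of {character_description}, {shot.action}"
        requests.append(
            {"shot": shot.name, "reference_image": reference_image, "prompt": prompt}
        )
    return requests


if __name__ == "__main__":
    sequence = [
        Shot("s1", "close-up", "smiling at the camera"),
        Shot("s2", "wide shot", "crossing a busy street"),
        Shot("s3", "medium shot", "opening a door"),
    ]
    for request in plan_requests("hero.png", "a courier with short silver hair", sequence):
        print(request)
```

Because the reference image and description are passed in once rather than typed per shot, a later change to the character only has to be made in one place.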

The Evolution Of Digital Humans In Virtual Brand Ambassadorship

Many brands are now moving toward “digital humans” as their primary ambassadors, as they offer more control and flexibility than human influencers. However, a digital human is only effective if it has a consistent and recognizable appearance. The tools provided here allow brands to generate an infinite variety of content, from holiday greetings to product tutorials, using the same digital persona. This creates a sense of familiarity and trust with the audience, as they begin to recognize the brand’s ambassador across different platforms and campaigns.

Addressing The Limitations Of Contextual Lighting In Synthetic Environments

One area where AI still faces challenges is the perfect integration of a character into complex environmental lighting. If a character is moving from a brightly lit outdoor scene to a dimly lit interior, the AI must recalculate how the light hits their specific features. While the system is highly capable of simulating these changes, there can sometimes be a slight mismatch in the shadows or reflections. In my experience, these issues can usually be resolved by providing more detailed prompts that describe the lighting environment, but it remains a technical boundary that creators should keep in mind when planning their shots.

A Practical Guide To Consistent Character Generation

To ensure your character remains consistent across multiple videos, it is best to follow a standardized process.

- Select a Definitive Reference Image: Choose one high-quality image of your character that clearly shows their face and key features. This image will serve as the “gold standard” for all subsequent generations.

- Use Consistent Prompting Keywords: When writing your prompts for different scenes, always use the same descriptors for the character’s physical traits (e.g., “blue eyes,” “short silver hair,” “leather jacket”). This helps the AI maintain a consistent internal model of the subject.

- Iterate and Curate for Narrative Flow: Generate multiple takes for each shot and select the ones that have the highest level of identity retention. Use the 1080p output to check for fine details and ensure that the character’s movements are consistent with their personality in previous clips.
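The second and third steps above can be sketched as two small helpers. Both are illustrative assumptions rather than platform features: `missing_descriptors` checks that a scene prompt still contains every locked character descriptor, and `pick_best_take` chooses among takes using an identity score that is assumed to come from elsewhere, such as a manual review rating or a comparison against the reference image.

```python
def missing_descriptors(prompt, descriptors):
    """Return the locked descriptors that do not appear in the prompt.

    Matching is a simple case-insensitive substring test, which is enough to
    catch a descriptor that was dropped or reworded between scenes.
    """
    lowered = prompt.lower()
    return [d for d in descriptors if d.lower() not in lowered]


def pick_best_take(takes, min_score=0.0):
    """Return the id of the take with the highest identity score.

    takes: list of (take_id, identity_score) pairs. The score is a
    hypothetical 0-to-1 rating supplied by the caller. Returns None when no
    take reaches min_score, which signals that the shot should be regenerated.
    """
    eligible = [take for take in takes if take[1] >= min_score]
    if not eligible:
        return None
    return max(eligible, key=lambda take: take[1])[0]


if __name__ == "__main__":
    locked = ["blue eyes", "short silver hair", "leather jacket"]
    scene_prompt = "Close-up, blue eyes, leather jacket, walking through rain"
    print(missing_descriptors(scene_prompt, locked))
    print(pick_best_take([("take_a", 0.82), ("take_b", 0.91), ("take_c", 0.77)], min_score=0.8))
```

Setting a minimum score makes the curation step explicit: a shot with no acceptable take is flagged for another round instead of being filled with the least bad option.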

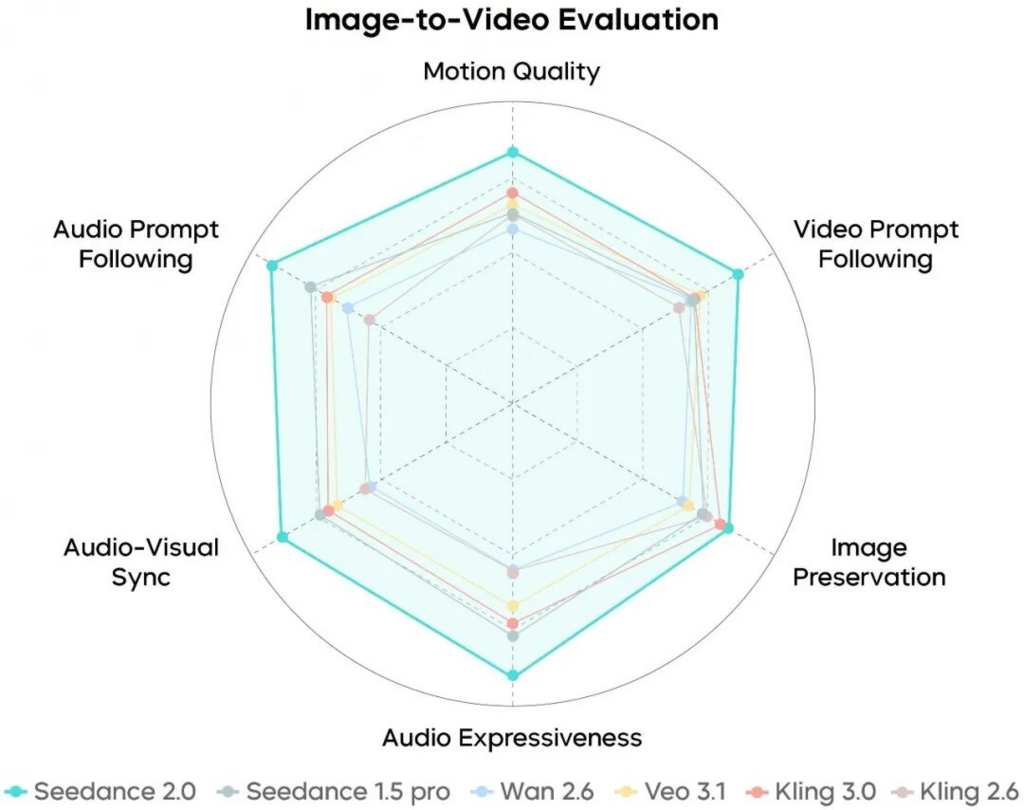

Comparing Traditional Character Animation With AI Driven Solutions

The following table explores the differences in how character consistency and motion are handled across different production environments.

| Production Element | Traditional 3D Animation | Seedance 2.0 AI Video |
| --- | --- | --- |
| Character Setup Time | High (Modeling & Rigging) | Zero (Image-Based Baseline) |
| Motion Authoring | Manual Keyframing | Automated Neural Synthesis |
| Identity Stability | Mathematically Guaranteed | Neural Feature Retention |
| Lighting Integration | Manual Shader Setup | Automated Scene Interpretation |
| Revision Speed | Low (Render-Intensive) | High (Real-Time Generation) |
| Production Skillset | Deep Technical Expertise | Creative Direction and Prompting |

The choice between these methods depends on the specific needs of the project. For a big-budget animated feature, the absolute control of 3D animation is still required. However, for the vast majority of digital marketing, social media, and independent narrative projects, the speed and consistency of AI-driven solutions offer a much more viable path forward. The goal is to leverage the efficiency of the AI to handle the volume of content required by modern audiences, while maintaining the high standards of character and brand identity that define successful storytelling. As we look to the future, the ability to effortlessly manage digital personas will be a core skill for every visual communicator.
